The latest Nvidia Blackwell chip rollout is making waves. The AI giant recently announced it had already shipped 13,000 of these powerful chips.

“You see now that at the tail end of the last generation of foundation models, we’re at about 100,000 Hoppers. The next generation starts at 100,000 Blackwells.” – Nvidia Founder & CEO Jensen Huang.

Blackwell Nvidia Is Ready to Disrupt

The Blackwell B200, a standout in the new lineup, represents a significant leap forward from Nvidia’s previous H100 AI chip. Training GPT-4 reportedly required over 8,000 H100 chips and drew over 15 megawatts of power, roughly the consumption of 30,000 British households.

Now, here’s where the Blackwell B200 truly shines. Using these new chips, the same intensive AI training process could be accomplished with just 2,000 B200s, drawing only 4 megawatts of power. This dramatic reduction in chip count and energy consumption opens up two intriguing possibilities for the AI industry. On one hand, the increased efficiency could significantly cut the overall electricity usage of AI training operations. On the other hand, the same power budget could be spent on training larger, more capable models.
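To put those figures side by side, here is a minimal sketch of the arithmetic in Python. It uses only the numbers quoted above; the names `H100_RUN`, `B200_RUN`, and `kw_per_chip` are made up for illustration, and the per-chip figure is simply total power divided by chip count, not an official chip power rating.

```python
# Figures quoted in the article for the same training workload.
H100_RUN = {"chips": 8_000, "megawatts": 15.0}
B200_RUN = {"chips": 2_000, "megawatts": 4.0}


def kw_per_chip(run):
    """Total power divided by chip count, in kilowatts (a system-level average)."""
    return run["megawatts"] * 1_000 / run["chips"]


# How many times fewer chips and how many times less power the B200 run needs.
chip_ratio = H100_RUN["chips"] / B200_RUN["chips"]
power_ratio = H100_RUN["megawatts"] / B200_RUN["megawatts"]

# Share of the original power draw that is saved.
power_saving_pct = (1 - B200_RUN["megawatts"] / H100_RUN["megawatts"]) * 100

if __name__ == "__main__":
    print(f"Chips needed: {chip_ratio:.2f}x fewer")
    print(f"Power drawn:  {power_ratio:.2f}x less ({power_saving_pct:.1f}% saved)")
    print(f"H100 run: {kw_per_chip(H100_RUN):.3f} kW per chip")
    print(f"B200 run: {kw_per_chip(B200_RUN):.3f} kW per chip")
```

Interestingly, the average draw per chip goes up slightly in this comparison. The saving comes from needing a quarter as many chips for the same job.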

The company recently shared some fascinating insights about their new Blackwell Nvidia chip. They’re expecting the gross margins to dip a bit in the coming months compared to the impressive 73.5% they reported in Q3.

Jensen explained that Blackwell Nvidia isn’t just a standalone chip. It’s available in various configurations, including entire rack setups with additional components. This flexibility is exciting, but it also adds complexity to the production process. The giant is managing different Blackwell Nvidia configurations, seven distinct chips, a wide array of networking options, and a diverse customer base ranging from cloud providers to equipment manufacturers.

But here’s where it gets really interesting: Huang revealed that Nvidia’s ability to produce more Blackwell systems isn’t limited only by its own capacity. The company is also constrained by how quickly its suppliers can provide components. Nvidia’s partners in the space include Micron, TSMC, SK Hynix, Vertiv, and Amphenol.

“It is the case that demand exceeds our supply, and that’s expected as we’re in the beginnings of this generative AI revolution.” — Huang noted.

Current State-of-the-Art Technology

The latest technological marvel is the Blackwell architecture. It is named in honor of David H. Blackwell, a brilliant American mathematician and statistician known for the Rao-Blackwell theorem, who made significant contributions to probability theory, game theory, statistics, and dynamic programming.

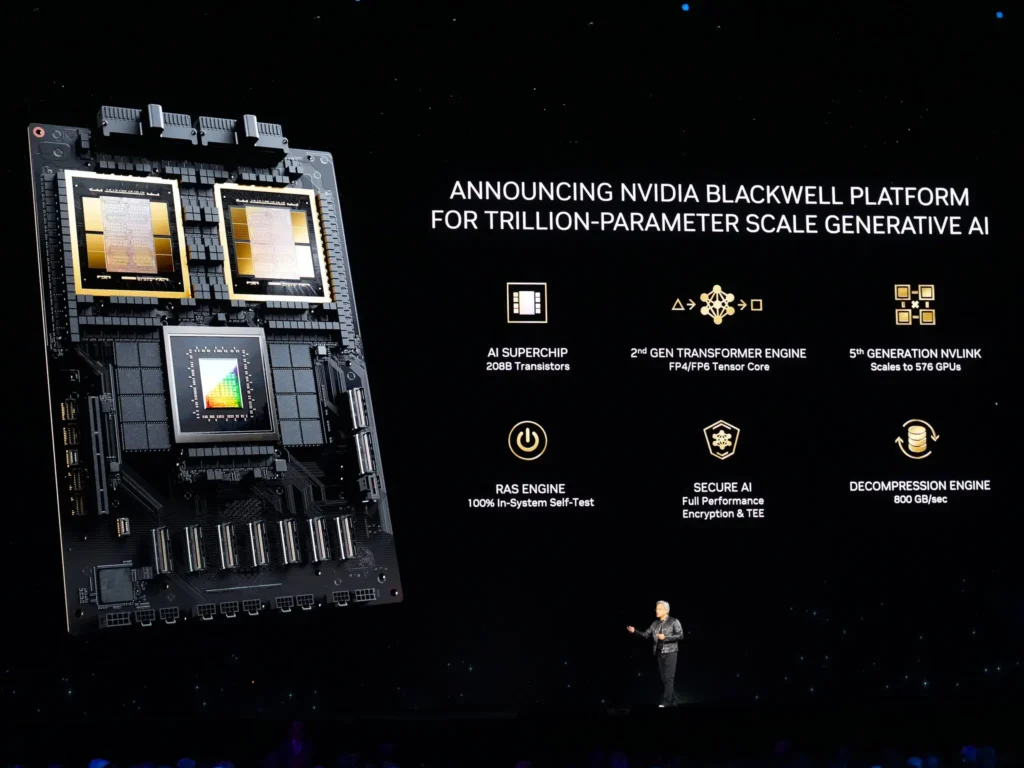

The Blackwell Nvidia architecture represents a quantum leap in GPU technology. At its core lies what Nvidia calls the world’s most powerful chip, packing an astounding 208 billion transistors. The chip is manufactured on a custom-built TSMC 4NP process, and its two reticle-limit GPU dies are joined by a lightning-fast 10 TB/second chip-to-chip link, creating a single unified GPU.

For context, TSMC’s similarly named N4P process is an enhanced version of its 5-nanometer technology. TSMC promises some impressive improvements from it: an 11% performance boost over the original N5 tech, 22% better power efficiency, and a 6% increase in transistor density. It’s a significant step forward in chip manufacturing.

But the innovations don’t stop there. The second-generation Transformer Engine introduces micro-tensor scaling support and advanced dynamic range management algorithms. These enhancements, integrated into NVIDIA’s TensorRT-LLM and NeMo Megatron frameworks, enable the Blackwell Nvidia architecture to handle double the compute and model sizes. Plus, with new 4-bit floating point AI inference capabilities, it’s pushing the boundaries of what’s possible in artificial intelligence.
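To give a feel for why fine-grained scaling matters at 4 bits, here is a minimal Python sketch of block-wise quantization. It is an illustration under stated assumptions, not Nvidia’s Transformer Engine algorithm: it assumes the common E2M1 layout for 4-bit floats (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, and 6), keeps one scale per small block of values, and uses hypothetical function names and a block size of 4 chosen only for readability.

```python
# Magnitudes representable by an E2M1 4-bit float (assumed format for this sketch).
FP4_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
FP4_MAX = FP4_GRID[-1]


def quantize_block(block):
    """Quantize one block: return (scale, codes), where codes are signed grid values."""
    max_abs = max((abs(x) for x in block), default=0.0)
    # Map the largest magnitude in the block onto the largest FP4 value.
    scale = max_abs / FP4_MAX if max_abs > 0 else 1.0
    codes = []
    for x in block:
        target = abs(x) / scale
        nearest = min(FP4_GRID, key=lambda g: abs(g - target))
        codes.append(-nearest if x < 0 else nearest)
    return scale, codes


def quantize(values, block_size=4):
    """Split values into blocks and quantize each with its own scale."""
    return [
        quantize_block(values[i:i + block_size])
        for i in range(0, len(values), block_size)
    ]


def dequantize(blocks):
    """Rebuild approximate values from (scale, codes) pairs."""
    return [scale * code for scale, codes in blocks for code in codes]


if __name__ == "__main__":
    # Small and large values side by side: a single shared scale would
    # flatten the small ones, while per-block scales preserve them.
    data = [0.1, -0.2, 0.3, 0.6, 100.0, 50.0, -25.0, 12.0]
    blocks = quantize(data, block_size=4)
    print(blocks)
    print(dequantize(blocks))
```

Because each block carries its own scale, the small values in the first block keep their resolution even though the second block holds numbers hundreds of times larger.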

Communication between GPUs is crucial for handling complex AI models, and the fifth-generation NVLink delivers on this front spectacularly. With a jaw-dropping 1.8TB/s bidirectional throughput per GPU, it enables seamless high-speed communication among up to 576 GPUs. This capability is essential for tackling multitrillion-parameter and mixture-of-experts AI models, opening up new possibilities in large language model development.
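For a rough sense of scale, the quoted NVLink numbers can be multiplied out in a few lines of Python. This is a back-of-the-envelope sketch: it treats 1.8 TB/s as a theoretical peak, assumes the bidirectional figure splits evenly between the two directions, and ignores topology and protocol overhead. The function names and the 2 TB payload are illustrative assumptions, not figures from Nvidia.

```python
NVLINK_TBPS_PER_GPU = 1.8   # bidirectional throughput per GPU, as quoted above
MAX_NVLINK_GPUS = 576       # largest NVLink domain mentioned above


def aggregate_bandwidth_tbps(gpus, per_gpu_tbps=NVLINK_TBPS_PER_GPU):
    """Sum of per-GPU bidirectional bandwidth across the domain, in TB/s."""
    return gpus * per_gpu_tbps


def one_way_transfer_seconds(payload_tb, per_gpu_tbps=NVLINK_TBPS_PER_GPU):
    """Time for one GPU to send a payload at peak rate.

    Assumes half of the bidirectional bandwidth is available in each direction.
    """
    return payload_tb / (per_gpu_tbps / 2)


if __name__ == "__main__":
    total = aggregate_bandwidth_tbps(MAX_NVLINK_GPUS)
    print(f"Aggregate peak across {MAX_NVLINK_GPUS} GPUs: {total:.1f} TB/s")
    # Hypothetical payload: 2 TB, e.g. a trillion parameters at 2 bytes each.
    print(f"Sending 2 TB one way: {one_way_transfer_seconds(2.0):.2f} s")
```

Real-world throughput will be lower than these peak numbers, but the order of magnitude shows why a fast interconnect matters once models stretch across hundreds of GPUs.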

Reliability is a major concern in any high-performance computing space, and Nvidia addresses it with a dedicated RAS (Reliability, Availability, and Serviceability) engine in Blackwell Nvidia GPUs. This engine goes beyond traditional reliability measures by incorporating AI-based preventative maintenance. It can run diagnostics and forecast potential issues, so that massive-scale AI deployments can operate uninterrupted for extended periods – we’re talking weeks or even months. That makes it well suited to long-running, large-scale AI training jobs.

The new Blackwell Nvidia GPUs are set to power the next wave of AI innovation. Tech giants like Amazon, Google, Microsoft, Oracle, Tesla, xAI, and OpenAI are already on board. This means Nvidia will play a crucial role in shaping AI’s future.

The Market is Screaming the Name

Mark Zuckerberg, founder and CEO of Meta: “AI already powers everything from our large language models to our content recommendations, ads, and safety systems, and it’s only going to get more important in the future. We’re looking forward to using NVIDIA’s Blackwell to help train our open-source Llama models and build the next generation of Meta AI and consumer products.”

Cloud providers are jumping in too. AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure will be among the first to offer Blackwell-powered instances. NVIDIA Cloud Partner companies, including Applied Digital, CoreWeave, Crusoe, IBM Cloud, Lambda, and Nebius, are also set to provide these advanced services.

The rollout extends to sovereign AI clouds, with Indosat Ooredoo Hutchison, Nexgen Cloud, Oracle EU Sovereign Cloud, Oracle’s government clouds for the US, UK, and Australia, Scaleway, Singtel, Northern Data Group’s Taiga Cloud, Yotta Data Services’ Shakti Cloud, and YTL Power International all planning to introduce Blackwell-based cloud services and infrastructure. This shows the global reach and diverse applications of Nvidia’s latest offering.